Content Pruning: How to Audit Your Blog & Decide What to Delete, Update, or Redirect

- 90%+ of all web pages receive zero organic search traffic (Forbes Advisor, 2026).

- 23%: year-over-year organic traffic increase reported after aggressive content pruning.

- 32%: traffic boost achieved in one documented case study from a structured prune.

- 6–12 months: the recommended content audit frequency for any active blog.

Table of Contents

The Blog That Is Quietly Sabotaging Your Rankings

Every blog starts the same way. A business publishes articles. Topics are chosen for what seems relevant at the time — a trending news angle, an answer to a common client question, a keyword a competitor seems to be targeting. Weeks become months. Months become years. And the blog grows, quietly and organically, into something nobody planned for: a sprawling archive of hundreds of pages, many of which no longer serve any user, rank for any query, or contribute to any business goal.

This is not a failure of ambition. It is a failure of maintenance. And it has a measurable, compounding cost.

Google's crawlers allocate a finite budget to every website — a limited number of pages they will crawl and evaluate in any given period. When your site's index is populated with thin, outdated, duplicated, or irrelevant pages, Google spends that budget on content that yields no signal value. Your strongest pages — the ones that deserve to rank — get crawled less frequently, re-evaluated less often, and ultimately ranked less aggressively than they would be on a leaner, higher-quality site.

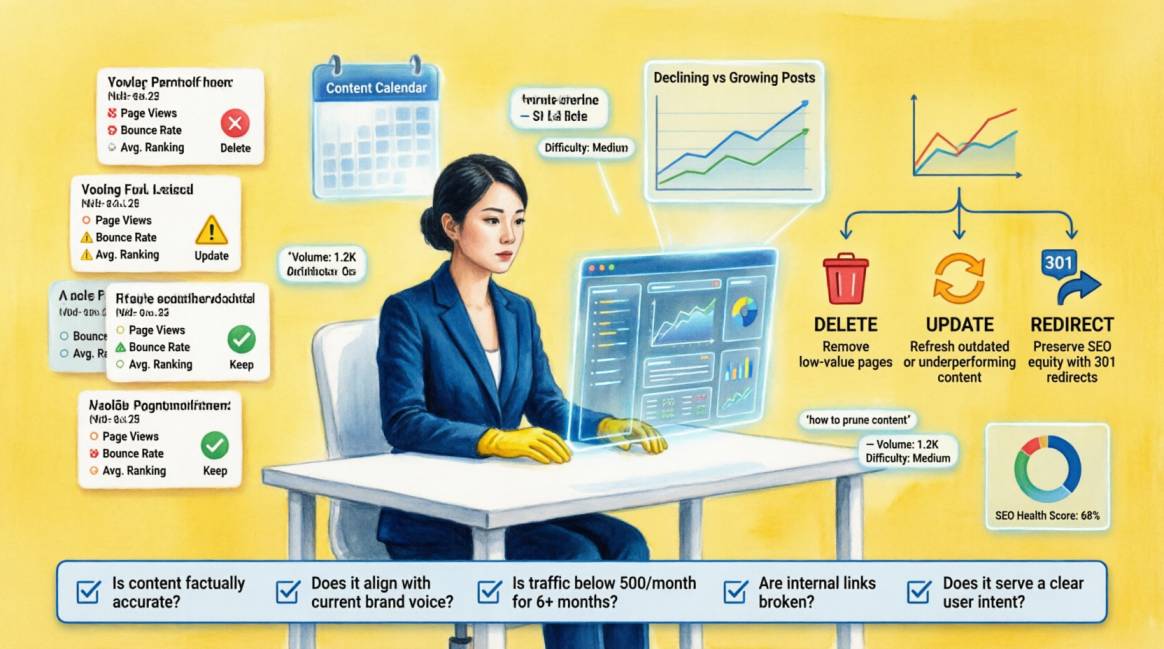

Content pruning is the systematic remedy. Not the panicked deletion of everything old. Not the indiscriminate culling of low-traffic pages. A structured, data-driven process of evaluating every page in your content inventory against a consistent set of criteria, assigning each one a decision — Keep, Update, Consolidate, or Delete — and executing those decisions in a sequence that protects your authority while eliminating the drag.

Done correctly, content pruning is one of the highest-ROI SEO activities available to any established website. Done incorrectly — deleting pages without redirects, removing high-backlink content without checking authority, mass-pruning without a recovery monitoring plan — it is one of the most reliable ways to trigger a significant, sustained traffic loss.

This guide gives you the complete framework: the audit methodology, the scoring system, the four decision types with their specific execution protocols, and the post-pruning monitoring plan that separates the teams who prune successfully from the teams who prune recklessly.

📌 What this guide covers:

- Why content bloat is an active SEO liability — not just a cosmetic issue

- The Semola Content Scoring Matrix: an 8-metric, 32-point system for making consistent, data-backed decisions on every page

- The four pruning decisions — KEEP, UPDATE, CONSOLIDATE, DELETE — with exact criteria and technical execution protocols for each

- The complete tool stack for running a content audit, including free and paid options

- How to sequence your pruning in batches to isolate performance changes and protect your strongest content

- The 90-day post-pruning monitoring framework with action triggers at each stage

- How content pruning directly improves AI Overview citation eligibility — the GEO angle most guides miss

- A practical content audit checklist and FAQ for teams at every stage of readiness

Why Content Bloat is an Active SEO Liability

“Every weak page on your site is paying a tax on every strong one”

The intuitive assumption about content is additive: more pages mean more opportunities to rank, more surface area for Google to evaluate, more reasons for visitors to stay. This assumption was partially true in the SEO environment of 2012. It is actively counterproductive in 2026.

Google's Helpful Content System, introduced in 2022 and folded into Google's core ranking systems in March 2024, evaluates content quality at the site level, not just the page level. A site where 40% of indexed pages are thin, outdated, or irrelevant sends a site-wide quality signal that can suppress rankings across all pages, including the strong ones. The weak content is not neutral. It is a negative weight dragging on the performance of everything else.

3 Mechanisms by Which Bloated Content Hurts Strong Content

Mechanism 1 — Crawl Budget Dilution

Googlebot allocates a crawl budget to each domain based on crawl demand (how popular and how fresh Google judges your URLs to be) and on how much crawling your server can handle. On a site with 500 indexed pages, if 300 of them are thin or stale, Googlebot spends a significant proportion of its budget evaluating content that yields no useful signal. Your 200 genuinely strong pages are crawled less frequently as a result. Content updates take longer to be re-evaluated. New content takes longer to be indexed. The cumulative effect is a site that responds more slowly to SEO work, not because the work is wrong, but because the crawl environment is polluted.

Mechanism 2 — Quality Signal Dilution

Google's quality assessment systems evaluate the composition of a site's indexed content, not just individual pages. A site with a high ratio of low-quality pages signals, at the domain level, that its content standards are inconsistent. This creates a quality ceiling that even strong individual pages struggle to break through. The practical effect: a website with 150 high-quality pages and 350 thin pages typically ranks worse, on average, than a website with 150 high-quality pages and 50 moderate-quality pages. Smaller is not inherently better — but cleaner is.

Mechanism 3 — Keyword Cannibalization

As blogs grow without editorial governance, it is common for multiple articles to target the same or closely related keyword intent. A content audit frequently reveals clusters of 3–5 articles all competing for the same query — none of them strong enough to rank because Google must distribute its evaluation across all of them and cannot identify a clear authority page. Each individual piece is weaker than a single consolidated, comprehensive article would be. Keyword cannibalization is simultaneously a content quality problem and a content architecture problem, and content pruning is the structural solution to both.

| 📊 THE BUSINESS CASE: WHAT THE RESEARCH SHOWS |
|---|
| Over 90% of all web pages receive zero organic search traffic; the majority of pages on most established blogs are invisible to search (Forbes Advisor, 2026) |
| Sites that conduct aggressive content pruning report an average 23% year-over-year increase in organic traffic to their remaining pages, because crawl budget concentrates on higher-quality content |
| One documented case study achieved a 32% traffic boost within 90 days of a structured content pruning exercise, without publishing a single new article |
| The Helpful Content Update (2022–2025) resulted in significant ranking loss for sites with high proportions of thin or AI-generated content; pruning directly addresses the underlying quality signal |
| Content pruning conducted quarterly for high-volume blogs and annually for lower-volume sites is the recommended maintenance cadence from leading SEO practitioners |

Building Your Content Inventory

You cannot make decisions about content you have not systematically documented

Before any decision is made about any page, you need a complete, structured inventory of every indexable URL on your site. This inventory is the master document that drives your entire audit — without it, decisions are made on impression rather than evidence, and the most consequential pages (the ones with high backlink counts or historical traffic spikes) are the easiest to overlook.

Step 1 — Export Your Full URL List

Your content inventory begins with a complete URL extraction. Use the following sources, combined into a single master spreadsheet:

- Google Search Console → Performance → Pages: export all URLs that have received at least one impression in the last 12 months. This is your 'known to Google' list.

- Screaming Frog crawl: run a full crawl of your domain (crawl depth unlimited for sites under 10,000 pages). Export all 200 OK URLs with status code, title tag, meta description, H1, word count, canonical tag, and internal link count.

- Your XML sitemap: export the URL list and cross-reference it with the Screaming Frog crawl — any URL in your sitemap that is not being crawled is a technical issue to address separately.

- Ahrefs Site Explorer → Best by Links: export the top 500 pages by referring domains — these are your highest-authority pages and must be explicitly identified before any delete decisions are made.

Merge all sources into a single Google Sheet with one row per URL. Deduplicate. Flag any URL that appears in one source but not others — these discrepancies are themselves diagnostic signals.
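
If your exports are too large to reconcile by eye, the merge-and-flag step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed workflow: the function names (`normalise`, `merge_sources`, `discrepancies`) and the source labels are hypothetical, and the normalisation rules (lowercase host, no trailing slash, no fragment) are assumptions you should adapt to your own URL conventions. Leave the Ahrefs top-500 export out of the discrepancy check, since it is a partial list by design.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def normalise(url):
    """Lowercase scheme and host, drop the fragment and any trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def merge_sources(sources):
    """Map each deduplicated URL to the set of source names that contain it.

    `sources` is a dict such as {"gsc": [...], "crawl": [...], "sitemap": [...]}.
    """
    seen = defaultdict(set)
    for name, urls in sources.items():
        for url in urls:
            seen[normalise(url)].add(name)
    return dict(seen)


def discrepancies(merged, source_names):
    """Return the URLs missing from at least one source, with the sources they are missing from."""
    expected = set(source_names)
    return {
        url: sorted(expected - found)
        for url, found in merged.items()
        if expected - found
    }
```

A URL that appears in the sitemap but not in the crawl, or in Search Console but not in the sitemap, shows up in the `discrepancies` result with the names of the sources it is missing from.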

Step 2 — Populate Performance Data for Each URL

For each URL in your master inventory, collect and record the following data. These eight metrics form the basis of the Semola Content Scoring Matrix described later in this guide:

| CONTENT INVENTORY DATA FIELDS: COLLECT BEFORE SCORING | |
|---|---|
| ☐ | Organic sessions (last 12 months): from GA4, filtered to Organic Search channel, segmented by landing page |
| ☐ | Referring domains: from Ahrefs (URL-level backlink data, not domain-level). This is your most critical data point for the delete vs redirect decision |
| ☐ | Search Console impressions (last 12 months): filtered by page URL |
| ☐ | Search Console average position: the ranking position for the URL's primary keyword |
| ☐ | Conversions attributed (last 12 months): from GA4, any goal completion (form submission, WhatsApp click, purchase) attributed to this URL as a landing page |
| ☐ | Date last published or last substantially updated: from your CMS metadata |
| ☐ | Word count: from Screaming Frog's word count column (or a manual spot-check for key pages) |
| ☐ | Topical alignment: manual assessment of whether this page belongs to a core content cluster currently supported by your strategy. Score as: Core / Adjacent / Loose / Unrelated |

Non-negotiable: do not score or make decisions on any URL until all eight data fields are populated. An incomplete data set produces unreliable decisions. If data is genuinely unavailable for a metric, note 'N/A', weight that row's score accordingly, and treat the total as provisional.

The Recommended Tool Stack for Your Content Audit

| Tool | Cost | Primary Use in Content Pruning |
|---|---|---|
| Google Search Console | Free | Impressions, clicks, CTR, average position per URL. The ground truth for which pages Google indexes and values. Export Performance data filtered by Page. |
| Google Analytics 4 | Free | Sessions, conversions, and engagement rate per landing page. Essential for identifying pages with traffic but no commercial value. Use Exploration reports for deep landing page analysis. |
| Screaming Frog SEO Spider | Free up to 500 URLs; £199/yr for full | Full site crawl: status codes, title tags, meta descriptions, H1s, canonical tags, word count, internal link count. The master inventory tool for any content audit. |
| Ahrefs Site Audit / Site Explorer | Paid (from $129/mo) | Referring domain count per URL, the most important data point for delete vs redirect decisions. Also identifies orphaned pages, broken internal links, and duplicate content. |
| Semrush Site Audit | Paid (from $139.95/mo) | Identifies thin content, duplicate content, and keyword cannibalization across your URL inventory. Useful complement to GSC data. |
| Google Sheets / Excel | Free | Your audit master spreadsheet. All tool exports merge here. Columns: URL, sessions, impressions, referring domains, word count, last updated, score, decision, action status. |
| Wayback Machine (archive.org) | Free | Check what old content looked like before deciding to delete. Occasionally reveals that a page with low current traffic had historical significance or evergreen potential worth restoring. |

Semola Content Scoring Matrix

“A consistent, 32-point scoring system that removes subjectivity from pruning decisions”

The most common failure in content pruning is inconsistency: different team members applying different standards to different pages, resulting in decisions that reflect individual preferences rather than objective data. The Semola Content Scoring Matrix solves this by converting your eight data points into a numerical score for every URL — producing a consistent, comparable, objective basis for decisions across your entire inventory.

Score each URL on all eight metrics using the criteria below. The maximum score is 32 (8 metrics × 4 points each). The minimum is 8 (1 point for each metric).

| Metric | Strong (4) | Adequate (3) | Weak (2) | Poor (1) |
|---|---|---|---|---|
| Organic sessions (monthly average, last 12 months) | ≥ 500/mo | 100–499/mo | 10–99/mo | < 10/mo |
| Referring domains (backlinks) | > 10 domains | 4–10 domains | 1–3 domains | 0 domains |
| Search Console impressions (monthly average, last 12 months) | ≥ 5,000/mo | 500–4,999/mo | 50–499/mo | < 50/mo |
| Ranking position (primary keyword) | Top 10 (page 1) | 11–20 (page 2) | 21–50 (pages 3–5) | Below 50 or not ranking |
| Conversions / leads attributed | 5+ per quarter | 1–4 per quarter | Occasional (fewer than 1 per quarter) | None recorded |
| Content freshness (last updated) | Within 6 months | 7–18 months ago | 19–30 months ago | > 30 months ago |
| Content depth vs top competitors | Longer + better | Comparable | Shorter | Significantly thinner |
| Topical alignment with strategy | Core topic cluster | Adjacent topic | Loosely related | Unrelated / legacy |
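
For teams scoring hundreds of URLs, the numeric rows of the matrix can be turned into a small helper. The sketch below is one possible encoding, not an official implementation: the function names and dictionary keys are hypothetical, exactly 500 sessions or 5,000 impressions is assumed to count as Strong, and the three judgement-based metrics (conversions, content depth, topical alignment) are assumed to arrive as 1–4 scores already assigned by a reviewer.

```python
def points_from_floor(value, floors):
    """Return 4, 3 or 2 for the first floor the value reaches, else 1.

    `floors` lists the lower bounds for 4, 3 and 2 points, highest first.
    """
    for points, floor in zip((4, 3, 2), floors):
        if value >= floor:
            return points
    return 1


def position_points(position):
    """Score average ranking position, where lower is better and None means not ranking."""
    if position is None or position > 50:
        return 1
    if position <= 10:
        return 4
    if position <= 20:
        return 3
    return 2


def freshness_points(months_since_update):
    """Score content freshness from the number of months since the last substantial update."""
    if months_since_update <= 6:
        return 4
    if months_since_update <= 18:
        return 3
    if months_since_update <= 30:
        return 2
    return 1


def score_url(row):
    """Return (total, per-metric scores) for one inventory row."""
    scores = {
        "sessions": points_from_floor(row["monthly_sessions"], (500, 100, 10)),
        "referring_domains": points_from_floor(row["referring_domains"], (11, 4, 1)),
        "impressions": points_from_floor(row["monthly_impressions"], (5000, 500, 50)),
        "position": position_points(row["position"]),
        "freshness": freshness_points(row["months_since_update"]),
        # Reviewer-assigned 1-4 scores for the judgement-based metrics.
        "conversions": row["conversions_score"],
        "depth": row["depth_score"],
        "alignment": row["alignment_score"],
    }
    return sum(scores.values()), scores
```

Keeping the per-metric breakdown alongside the total makes it easier to see why a page landed in a given band when you review borderline cases.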

Interpreting Your Scores — The Four Decision Bands

| Score Range | Decision | Action |
|---|---|---|
| 27–32 | 🟢 KEEP AS-IS | Strong performer. No intervention required. Flag for annual review only. |
| 21–26 | 🔵 UPDATE | Good bones, declining performance. Refresh data, deepen content, improve on-page signals. High ROI intervention. |
| 14–20 | 🟡 CONSOLIDATE | Weak standalone performer that may share a topic with other pages. Evaluate keyword cannibalization. Merge and redirect to a stronger parent page. |
| 8–13 | 🔴 DELETE + REDIRECT | Page is draining crawl budget and diluting site quality. If it has zero backlinks: 410 Gone. If it has any referring domains: 301 redirect to the nearest relevant page. |

Important nuance: The scoring matrix is a guide, not a law. A page scoring 12 that has 15 referring domains should never be deleted regardless of its score — the backlink authority is too valuable. Always apply the backlink override rule: any page with 5 or more referring domains requires individual review before any delete decision, regardless of its total score.
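
The bands and the backlink override can be expressed together so that no high-authority page slips into a delete batch unreviewed. A minimal sketch, assuming a hypothetical `decide` function that takes the total score and the URL-level referring domain count:

```python
def decide(total_score, referring_domains):
    """Return (decision band, needs_individual_review) for one URL.

    Bands follow the 32-point matrix. The review flag applies the backlink
    override: 5 or more referring domains on a page in the delete band.
    """
    if not 8 <= total_score <= 32:
        raise ValueError("total score must be between 8 and 32")
    if total_score >= 27:
        band = "KEEP"
    elif total_score >= 21:
        band = "UPDATE"
    elif total_score >= 14:
        band = "CONSOLIDATE"
    else:
        band = "DELETE"
    needs_review = band == "DELETE" and referring_domains >= 5
    return band, needs_review
```

With this sketch, the page from the example above (score 12, 15 referring domains) comes back as `("DELETE", True)`: the band is recorded, but the flag stops it from being deleted without a human decision.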

The Four Pruning Decisions — Criteria and Execution

“Each decision has a specific technical execution protocol. Knowing the criteria is only half the job.”

Once every URL in your inventory has a score, you have a prioritised list of decisions to execute. The four possible decisions — Keep, Update, Consolidate, Delete — have distinct technical execution requirements. Executing the right decision incorrectly is as costly as making the wrong decision in the first place.

🟢 Keep Decision:

When to use this decision

Pages scoring 27–32. Strong organic traffic, multiple referring domains, high Search Console impressions, good rankings, and topical alignment with your current strategy. These pages require no immediate intervention — they are working as intended.

How to execute

No changes to URL, content structure, or internal linking. Add to your 'protected pages' list — ensure these URLs are never accidentally affected by site migrations, CMS changes, or template updates. Schedule an annual review to refresh statistics and ensure content remains accurate. Internally link new content to these pages where topically relevant — they are your highest-authority assets.

🔵 Update Decision:

When to use this decision

Pages scoring 21–26. The page has a valid topic, some organic presence, or meaningful backlinks — but its content is outdated, too thin, or outranked by fresher competitor content. The core asset is salvageable; it just needs investment. This is typically your highest-ROI decision category: you already have the URL, the backlinks, and some Google familiarity — you just need to improve the content to unlock better rankings.

How to execute

- Refresh all statistics and data points that have changed since publication.

- Expand content depth: identify the top 3 competing pages for the primary keyword and ensure your updated version matches or exceeds their depth.

- Update the publication date in your CMS only after making substantial content changes. Updating the date without improving the content is a deceptive signal Google can identify.

- Strengthen the on-page SEO: review title tag, meta description, H1, internal linking, and schema markup. Add or update FAQPage schema for Q&A content.

- Submit the updated URL for re-crawling via Search Console URL Inspection.

⚠️ NEVER: Change the URL when updating content. Doing so breaks all existing backlinks and internal links. Update the content at the existing URL. If the URL is genuinely problematic (e.g., contains a year like /best-seo-tools-2021/), create a new evergreen URL and 301 redirect the old one.

🟡 Consolidate Decision:

When to use this decision

Pages scoring 14–20 that share a topic or keyword intent with one or more other pages. Consolidation addresses keyword cannibalization by merging multiple weak pages into a single, authoritative resource — and redirecting the merged pages to the new master URL. This preserves any backlink equity from the merged pages while creating a stronger unified asset.

How to execute

- Identify the canonical target page: the URL with the most referring domains and highest traffic becomes the master page.

- Extract the best content from each page being merged: statistics, examples, unique sections.

- Rewrite the master page to incorporate the best elements from all merged pages. The result should be meaningfully longer and more comprehensive than any individual source page.

- Set up 301 redirects from every merged URL to the master URL.

- Update all internal links pointing to merged URLs so they point directly to the master URL.

- Submit the master URL for re-indexing.

- Monitor the master URL's impressions growth in Search Console over 30–60 days.

⚠️ NEVER: 301 redirect merged URLs to the homepage. This wastes the link equity of the merged pages. Always redirect to the most topically relevant page, which in this case is the consolidated master article.
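
Choosing the master URL and building the redirect map for a cluster can be sketched as follows. The function name and row keys are hypothetical, and the tie-breaking rule is an assumption: the guide asks for the page with the most referring domains and the highest traffic, so where those two disagree this sketch ranks by referring domains first and uses sessions only to break ties.

```python
def plan_consolidation(cluster):
    """Pick the master URL for a cluster and map every other URL to it.

    `cluster` is a list of dicts with "url", "referring_domains" and "sessions".
    """
    if not cluster:
        raise ValueError("cluster must contain at least one page")
    master = max(cluster, key=lambda page: (page["referring_domains"], page["sessions"]))
    redirects = {
        page["url"]: master["url"]
        for page in cluster
        if page["url"] != master["url"]
    }
    return master["url"], redirects
```

The returned dictionary is the list of 301 redirects to implement, and its keys are also the URLs whose internal links need repointing to the master page.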

🔴 Delete Decision:

When to use this decision

Pages scoring 8–13 with zero or near-zero referring domains, no meaningful traffic, no conversions, and no topical alignment with your current strategy. These pages are draining crawl budget and diluting site quality without contributing anything in return. Deletion removes the quality drag and allows Google to concentrate its evaluation on your strongest content.

How to execute

- Check referring domains one final time before deleting. Even pages with very low scores occasionally have backlinks from authoritative sources not captured in your data.

- If the page has any referring domains (even 1–2): implement a 301 redirect to the nearest relevant live page rather than deleting outright.

- If the page has zero referring domains and zero traffic: use a 410 Gone response rather than a 404. A 410 signals that the removal is deliberate and permanent, and Google tends to drop 410 pages from its index somewhat faster than 404s, which is what you want for content you are permanently retiring.

- Remove all internal links pointing to deleted URLs.

- Update your XML sitemap to exclude all deleted URLs.

- After executing, run Screaming Frog to verify that no remaining internal links resolve to the deleted URLs.

⚠️ NEVER: Delete pages with 5+ referring domains without implementing a 301 redirect. Doing so permanently destroys the link equity those domains represent. A 301 to a relevant alternative page is commonly estimated to preserve approximately 90–95% of that equity.
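
The 301-versus-410 rule for retired pages is simple enough to encode as a guard before any batch goes live. A minimal sketch, assuming a hypothetical `retirement_response` helper; it also rejects homepage targets, in line with the warning against redirecting pruned URLs to the homepage:

```python
from urllib.parse import urlsplit


def retirement_response(referring_domains, redirect_target=None):
    """Return (status_code, target) for a page that is being retired.

    Pages with any referring domains get a 301 to a relevant page;
    pages with none get a 410 Gone and no target.
    """
    if referring_domains > 0:
        if not redirect_target:
            raise ValueError("a page with backlinks needs a redirect target")
        if urlsplit(redirect_target).path in ("", "/"):
            raise ValueError("redirect to a relevant page, not the homepage")
        return 301, redirect_target
    return 410, None
```

How the resulting status codes are served depends on your stack (CMS redirect plugin, server configuration, or CDN rules), which is outside the scope of this sketch.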

Sequencing & Batching Your Pruning

“How you execute matters as much as what you decide”

One of the most important operational decisions in any content pruning project is how to sequence the execution. The instinct — driven by the desire to clean everything at once — is almost always wrong. Mass execution of pruning decisions makes it impossible to attribute performance changes to specific actions, eliminates the ability to course-correct mid-project, and amplifies the risk of an error affecting too many pages simultaneously.

The correct approach is phased, batched execution with monitoring periods between each phase. This approach allows you to validate that each batch of changes is producing the expected improvement before proceeding to the next — and to catch any unexpected consequences early enough to correct them without cascading damage.

Recommended Pruning Sequence

- Phase 1 — Delete: Begin with your delete list. These are your lowest-risk decisions because the pages have no traffic and no backlinks. Deleting them produces an immediate crawl budget benefit with zero authority risk. Execute in batches of no more than 50 pages per week. Verify 410/404 responses after each batch with a Screaming Frog crawl. Wait 2 weeks and observe Search Console coverage changes before proceeding.

- Phase 2 — Consolidate: Execute your consolidation decisions after the delete phase has stabilised. Consolidation is more complex — it requires content merging, redirect implementation, and internal link updates. Work cluster by cluster: complete all redirects and internal link updates for one topic cluster before moving to the next. Verify every redirect with a manual check and a Screaming Frog list crawl. Allow 3–4 weeks of monitoring before the next phase.

- Phase 3 — Update: Execute content updates on your mid-performing pages after Phase 1 and Phase 2 are complete. Updates benefit from the improved crawl environment created by the delete and consolidate phases — your updated pages will be re-crawled more efficiently on a cleaner site. Prioritise updates on pages with the most referring domains and the highest historical traffic.

- Phase 4 — Verify Keep: Review your 'keep' pages one final time after Phases 1–3 are complete. Verify that no kept page has become an orphan due to internal link changes made during earlier phases. Confirm that kept pages are the primary beneficiaries of the improved crawl budget — they should be seeing increased crawl frequency in GSC Crawl Stats.
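
The 50-pages-per-week cap from Phase 1 can be applied mechanically to a delete list. A minimal sketch with a hypothetical `weekly_batches` helper; the monitoring pause between phases still has to be scheduled by hand:

```python
def weekly_batches(urls, batch_size=50):
    """Split a delete list into (week_number, urls) batches of at most `batch_size`."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [
        (week, urls[start:start + batch_size])
        for week, start in enumerate(range(0, len(urls), batch_size), start=1)
    ]
```

A delete list of 120 URLs, for example, becomes three weekly batches of 50, 50, and 20 pages.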

⚠️ Pruning Mistakes That Cause Irreversible Damage

- Deleting high-backlink pages without a 301 redirect: the referring domain equity is permanently destroyed, not recovered

- Mass-deleting everything at once: eliminates your ability to diagnose which changes drove which performance shifts

- Redirecting all deleted pages to the homepage: creates soft 404 signals and wastes link equity on an irrelevant destination

- Updating publication dates without substantially updating content: Google identifies this as a deceptive freshness signal

- Skipping the noindex check: before pruning, verify that none of your 'keep' pages carries an accidental noindex tag. Discovering after the prune that a page you were counting on was noindexed all along is a painful error, because it was never in a position to benefit from the cleaner site

- Not updating internal links after consolidation: internal links left pointing at redirected URLs force an extra redirect hop on every crawl and send mixed signals about which URL is the canonical one

- Pruning before building a baseline: if you have not documented your pre-pruning performance metrics, you cannot measure whether the pruning worked
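
Redirect chains are one of the easier mistakes to catch before launch, because they can be detected in the redirect map itself. The sketch below assumes your redirects are held as a simple source-to-target dictionary (a hypothetical format) and rewrites every source to point at its final destination, raising an error if it finds a loop:

```python
def flatten_redirects(redirects):
    """Rewrite each redirect so it points at its final destination.

    `redirects` maps source URL to target URL. A chain such as
    /a -> /b -> /c becomes /a -> /c and /b -> /c.
    """
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until the target is no longer itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat
```

Running this over the combined map from every consolidation cluster before implementation helps ensure each retired URL reaches its destination in a single hop.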

GEO Angle — How Content Pruning Improves AI Visibility

A cleaner content index is not just better for Google — it is better for every AI system that sources from the web

Content pruning in 2026 carries a dimension of value that did not exist when the strategy was first documented: its impact on GEO (Generative Engine Optimization) and AI citation eligibility. Google AI Overviews, Perplexity, and ChatGPT source their answers from indexed web content — specifically from content that their systems evaluate as authoritative, fresh, and genuinely informative. A site cluttered with thin, outdated, or duplicated content is less likely to be cited by AI systems, because those systems apply quality filters to their source material that are at least as rigorous as Google's search ranking filters.

When you prune a site's content inventory to concentrate authority on fewer, higher-quality pages, you are simultaneously:

- Increasing the topical depth of the pages that remain — AI systems favour comprehensive, structured content over fragmented partial coverage of the same topic

- Concentrating internal link equity on a smaller number of pages — making each remaining page more authoritative in Google's evaluation and, by extension, more likely to be selected as an AI source

- Improving crawl consistency on your best content — AI systems refresh their knowledge from web crawls, and pages crawled more frequently are more likely to reflect current information

- Eliminating content that provides incorrect or outdated answers — AI systems that cite outdated content risk surfacing misinformation, which their quality filters are increasingly designed to prevent

The Update Decision and GEO Readiness

Of the four pruning decisions, the UPDATE decision has the most direct GEO impact. When you update a page with current statistics, refreshed examples, a new FAQ section with FAQPage schema, and current structured data, you are creating exactly the content profile that AI systems prefer to cite: fresh, structured, question-answering, and authoritative.

For every page that receives an UPDATE decision in your audit, add the following GEO-readiness tasks to the update protocol:

- Add or refresh a FAQ block with at least 4 questions answered in direct, extractable format — and mark it up with FAQPage schema

- Update all statistics to reflect 2026 data — outdated statistics are one of the primary reasons AI systems deprioritise content as a citation source

- Add an 'Article' or 'BlogPosting' schema with a current dateModified timestamp — this signals freshness directly to AI crawlers

- Include at least one specific, original claim or data point that cannot be found on other pages — the Information Gain standard applies to AI citation as much as it applies to traditional rankings

- Structure the opening 150 words to directly answer the primary question the page addresses — AI systems frequently extract the opening section of an article when synthesising responses
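
The two schema tasks in the list above can be illustrated with a short generator. This is a sketch under stated assumptions: the function name `build_jsonld` and its parameters are hypothetical, the types and properties used (`BlogPosting`, `FAQPage`, `Question`, `Answer`, `dateModified`, `mainEntity`, `acceptedAnswer`) are standard schema.org vocabulary, and most CMS platforms or SEO plugins can produce equivalent markup without custom code.

```python
import json


def build_jsonld(headline, url, date_published, date_modified, faqs):
    """Return a JSON-LD string holding a BlogPosting node and a FAQPage node.

    `faqs` is a list of (question, answer) pairs; dates are ISO 8601 strings.
    """
    article = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    faq_page = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps([article, faq_page], indent=2)
```

The returned string is intended to sit inside a `<script type="application/ld+json">` element in the page head. Only change `dateModified` when the content itself has been substantially updated.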

Post-Pruning Monitoring — A 90-Day Recovery Protocol

The pruning is not over when the redirects go live. It is over when the performance data confirms improvement.

The post-pruning monitoring period is where most teams make a critical error: they either panic at early volatility and reverse changes prematurely, or they declare success at 30 days without verifying that performance has genuinely stabilised and improved. Neither response serves the long-term quality of the site.

Expect a modest traffic dip in the first 2 to 4 weeks after a significant pruning exercise. This is a documented and expected outcome: Google is re-evaluating the site's quality composition, and the recalibration produces short-term volatility before the quality signal improvement translates into ranking gains. A dip of 5–15% during this window is normal. A dip of 30% or more requires immediate investigation.

| Window | What to Monitor | Action Trigger |
|---|---|---|
| Days 1–7 | Verify technical execution: confirm 301/410 responses are returning correct status codes. Run Screaming Frog to catch any broken internal links pointing to pruned URLs. Check GA4 for any unexpected traffic drops on non-pruned pages. | Any page now returning 4xx that was not in your delete list → check redirect map. Any major non-pruned page losing significant sessions → audit for internal link dependency issues. |
| Days 8–30 | Monitor Search Console index coverage: pruned pages should begin dropping from the index. Track impressions for your KEEP and UPDATE pages, which should stabilise or begin growing as crawl budget redistributes to high-quality content. | Index coverage showing large numbers of non-pruned pages under 'Crawled - currently not indexed' → may indicate a quality signal issue affecting the broader site. Investigate before expanding the prune. |
| Days 31–60 | Measure organic traffic change vs your pre-audit baseline for the same pages. Expect some initial volatility: a modest dip (5–15%) in the first 30 days is normal as Google reprocesses the site. By Day 60, traffic should be recovering toward or above baseline. | Traffic still below 85% of baseline at Day 60 → investigate whether any pruned pages had unexpected authority value. Check for redirect chains or missed high-traffic pages in the delete batch. |
| Days 61–90 | Full performance assessment: compare organic sessions, impressions, and conversions for your top 20 pages against pre-audit baseline. Validate that kept and updated pages are performing better than before. Assess crawl rate change in GSC Crawl Stats. | No improvement in overall site impressions after 90 days → the pruning may have been insufficient. Consider a second round targeting additional weak pages. Or: your KEEP pages need deeper content updates to benefit from the redistributed crawl budget. |
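
The traffic thresholds in this protocol can be wired into a simple check against your pre-pruning baseline. The sketch below is illustrative only: `assess_traffic` is a hypothetical helper, and the "watch closely" label for dips between 15% and 30% is an assumption, since the guide defines only the normal range (5–15%), the immediate-investigation threshold (30% or more), and the Day 60 floor (85% of baseline).

```python
def assess_traffic(baseline_sessions, current_sessions, day):
    """Classify post-pruning organic traffic against the pre-pruning baseline."""
    if baseline_sessions <= 0:
        raise ValueError("baseline_sessions must be positive")
    ratio = current_sessions / baseline_sessions
    drop = 1 - ratio
    if drop >= 0.30:
        return "investigate immediately"
    if day >= 60 and ratio < 0.85:
        return "investigate pruned pages"
    if drop > 0.15:
        # Assumed middle band: deeper than the normal dip, below the 30% alarm.
        return "watch closely"
    return "within expected range"
```

Compare like with like: use the same date-range length and the same set of pages for both the baseline and the current figure.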

Content Pruning for Nigerian & African Websites — The Specific Context

The principles of content pruning apply universally, but Nigerian and African websites have some specific characteristics that shape how the process should be approached.

The Low-Volume Data Problem

Many Nigerian business websites have relatively low organic traffic compared to equivalent Western-market sites — not because their content is worse, but because the search volume in their target markets is lower and the competitive landscape is still developing. This creates a data interpretation challenge: a page with 80 organic sessions per month may score as 'Weak' in our scoring matrix, but in the context of a Nigerian market keyword with a total monthly search volume of 300, it is actually performing well.

The correction: when auditing Nigerian websites, always contextualise traffic data against market search volume. A page receiving 80 sessions on a keyword with 300 monthly searches has a 27% traffic share — an exceptional performance. The same 80 sessions on a keyword with 50,000 monthly searches is a failure. Use Google Keyword Planner to document search volume for each page's target keyword alongside the traffic data in your inventory.
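
The arithmetic behind that correction is a single ratio, shown here as a small helper so it can be added as a column in the audit sheet. The function name is hypothetical, and the result is only as reliable as the search volume estimate you feed it:

```python
def traffic_share(monthly_sessions, monthly_search_volume):
    """Return organic sessions as a fraction of the keyword's monthly search volume."""
    if monthly_search_volume <= 0:
        raise ValueError("monthly_search_volume must be positive")
    return monthly_sessions / monthly_search_volume


# The two cases from the text: the same 80 sessions, very different outcomes.
local_share = traffic_share(80, 300)      # about 0.27, i.e. roughly 27%
broad_share = traffic_share(80, 50_000)   # 0.0016, i.e. 0.16%
```

Recording this share next to the raw session count lets a reviewer override a 'Weak' sessions score when the page is clearly capturing a large part of a small market.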

The Recency Gap

Nigerian blogs frequently have significant content gaps in their publication history — periods of 6 to 18 months with no new content, followed by bursts of publishing. This creates clusters of content from specific periods that share similar publication dates, similar writing styles, and similar levels of outdatedness. These clusters are the natural starting point for an UPDATE audit: they represent pages that were once strategically relevant, have some organic history, but need substantial freshness investment to compete in 2026's search environment.

English-Plus-Local-Language Consideration

For websites that have begun publishing content in Yoruba, Hausa, Igbo, or Pidgin alongside English, the pruning framework applies differently. Local-language content has almost no competition — meaning that even thin local-language pages may rank well simply because of scarcity. Before applying the scoring matrix to local-language content, verify whether the page is ranking and receiving traffic in its target language. If it is — even with a low word count — it may warrant a protect-and-expand decision rather than a standard scoring band response.

The Complete Content Audit Execution Checklist

| | PHASE 1: INVENTORY BUILDING (WEEK 1–2) |
|---|---|
| ☐ | Export all URLs from Google Search Console (Performance → Pages, last 12 months, export to CSV) |
| ☐ | Run a full Screaming Frog crawl of your domain and export status code, title, H1, word count, canonical, and internal link count |
| ☐ | Export referring domain data by URL from Ahrefs Site Explorer → Best by Links |
| ☐ | Export GA4 organic sessions by landing page (last 12 months, organic channel filter) |
| ☐ | Export GA4 conversion data by landing page (last 12 months) |
| ☐ | Merge all exports into one Google Sheet: one row per URL, one column per data metric |
| ☐ | Flag all URLs with 5+ referring domains; these require individual review regardless of score |
| ☐ | Identify and flag any URLs with a noindex tag in your Screaming Frog export; these are not indexed and should not be in your scoring matrix |
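If you would rather script the merge than do it by hand in a spreadsheet, the Phase 1 steps can be sketched roughly as follows. This is an illustration under stated assumptions: each export has already been loaded as a list of dictionaries keyed by `url`, and the column names (`clicks`, `word_count`, `noindex`, `referring_domains`) are hypothetical, not the tools' real export headers.

```python
from collections import defaultdict


def build_inventory(*exports):
    """Merge several per-URL exports into one row per URL, then add the Phase 1 flags."""
    by_url = defaultdict(dict)
    for rows in exports:
        for row in rows:
            # Later exports add columns to the same URL's row.
            by_url[row["url"]].update({k: v for k, v in row.items() if k != "url"})

    inventory = []
    for url, metrics in sorted(by_url.items()):
        row = {"url": url, **metrics}
        # 5+ referring domains: individual review regardless of score.
        row["needs_individual_review"] = int(row.get("referring_domains", 0)) >= 5
        # noindex pages are not indexed, so they stay out of the scoring matrix.
        row["exclude_from_scoring"] = bool(row.get("noindex", False))
        inventory.append(row)
    return inventory


# Hypothetical sample rows standing in for the GSC, Screaming Frog, and Ahrefs exports.
gsc = [{"url": "/blog/a", "clicks": 120}, {"url": "/blog/b", "clicks": 3}]
crawl = [
    {"url": "/blog/a", "word_count": 1400, "noindex": False},
    {"url": "/blog/b", "word_count": 300, "noindex": True},
]
ahrefs = [{"url": "/blog/a", "referring_domains": 7}]

for row in build_inventory(gsc, crawl, ahrefs):
    print(row["url"], row["needs_individual_review"], row["exclude_from_scoring"])
```

URLs that are missing from one export simply lack those columns, which is worth checking by eye before scoring.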

| | PHASE 2: SCORING AND DECISION-MAKING (WEEK 2–3) |
|---|---|
| ☐ | Apply the Semola Content Scoring Matrix to every URL: score each of the 8 metrics, total the score, assign a score band (27–32: Keep / 21–26: Update / 14–20: Consolidate / 8–13: Delete) |
| ☐ | Apply the backlink override rule: any URL with 5+ referring domains is automatically moved to 'Keep' or 'Update' regardless of score |
| ☐ | Identify keyword cannibalization clusters: group all URLs by primary keyword intent; any group with 3+ pages targeting the same query is a consolidation candidate |
| ☐ | Finalise your four decision lists (KEEP, UPDATE, CONSOLIDATE, DELETE), with a specific action noted for each URL |
| ☐ | For all CONSOLIDATE decisions: identify the master URL (highest referring domains + traffic) and the source URLs to be merged and redirected |
| ☐ | For all DELETE decisions: confirm 0 referring domains before scheduling deletion. Any URL with referring domains moves to CONSOLIDATE or UPDATE |
| ☐ | Document your pre-pruning baseline: total organic sessions (last 30 days), total Search Console impressions (last 30 days), and total indexed pages from the GSC Page indexing report (formerly Coverage). Save this data before any changes are made |
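The band assignment and the two backlink rules in Phase 2 can be expressed as a small decision function. Treat this as a sketch: it assumes each of the 8 metrics is scored from 1 to 4 (which is what the 8 to 32 range implies), and where the checklist allows a choice ('Keep' or 'Update', CONSOLIDATE or UPDATE) it picks one option arbitrarily. The function names are hypothetical.

```python
def score_band(total: int) -> str:
    """Map a total matrix score (8 to 32) to its decision band."""
    if not 8 <= total <= 32:
        raise ValueError("total score must be between 8 and 32")
    if total >= 27:
        return "KEEP"
    if total >= 21:
        return "UPDATE"
    if total >= 14:
        return "CONSOLIDATE"
    return "DELETE"


def decide(metric_scores, referring_domains: int) -> str:
    """Apply the score band, then the backlink rules from the checklist."""
    if len(metric_scores) != 8:
        raise ValueError("expected exactly 8 metric scores")
    band = score_band(sum(metric_scores))
    # Backlink override: 5+ referring domains never lands below Update.
    # (Assumption: we choose UPDATE; the checklist permits Keep or Update.)
    if referring_domains >= 5 and band in ("CONSOLIDATE", "DELETE"):
        return "UPDATE"
    # Delete guard: any referring domains at all moves the URL out of DELETE.
    # (Assumption: we choose CONSOLIDATE; the checklist permits Consolidate or Update.)
    if band == "DELETE" and referring_domains > 0:
        return "CONSOLIDATE"
    return band


print(decide([4, 4, 3, 4, 3, 4, 3, 3], referring_domains=0))  # KEEP (total 28)
print(decide([1, 2, 1, 1, 2, 1, 1, 1], referring_domains=0))  # DELETE (total 10)
print(decide([1, 2, 1, 1, 2, 1, 1, 1], referring_domains=2))  # CONSOLIDATE
print(decide([1, 2, 1, 1, 2, 1, 1, 1], referring_domains=6))  # UPDATE
```

The output is a starting decision, not a final one: flagged URLs still get the individual review the checklist calls for.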

| | PHASE 3: EXECUTION (WEEK 3–8, BATCHED) |
|---|---|
| ☐ | Execute DELETE decisions first: implement 410 Gone (no backlinks) or 301 redirect (any backlinks) for each. Batch: maximum 50 URLs per week. Verify status codes with Screaming Frog after each batch. |
| ☐ | Remove all internal links pointing to deleted URLs and update them to point to the nearest relevant live page |
| ☐ | Execute CONSOLIDATE decisions: merge content into master page, implement 301 redirects from source URLs, update all internal links from source URLs to master URL |
| ☐ | Submit your updated XML sitemap to Google Search Console after completing the deletions and consolidations above |
| ☐ | Execute UPDATE decisions: refresh content, update statistics, add/update FAQPage schema, update dateModified in Article schema, resubmit via URL Inspection |
| ☐ | Verify your GEO readiness additions on all updated pages: FAQ block with schema, fresh data points, information-dense opening paragraph, correct Article schema with updated dateModified |
| ☐ | Monitor GSC crawl stats and the Page indexing (index coverage) report daily for the first 14 days post-execution |
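The first Phase 3 step (410 versus 301, at most 50 URLs per week) lends itself to a simple planning script. The sketch below only produces a plan; it does not touch your server or CMS. The dictionary keys `referring_domains` and `redirect_to` are hypothetical names for columns in your own inventory.

```python
def plan_deletions(pages, batch_size=50):
    """Assign 410 or 301 to each URL and split the work into weekly batches."""
    actions = []
    for page in pages:
        if page["referring_domains"] > 0:
            # Backlinked URL: redirect to a relevant live page rather than removing it.
            if not page.get("redirect_to"):
                raise ValueError(f"{page['url']} has backlinks but no redirect target")
            actions.append({"url": page["url"], "status": 301, "target": page["redirect_to"]})
        else:
            # No backlinks: serve 410 Gone.
            actions.append({"url": page["url"], "status": 410, "target": None})
    # One batch per week, never more than batch_size URLs.
    return [actions[i:i + batch_size] for i in range(0, len(actions), batch_size)]


# Hypothetical delete list: 120 zero-backlink URLs plus one with backlinks.
pages = [{"url": f"/blog/old-{n}", "referring_domains": 0} for n in range(120)]
pages.append({"url": "/blog/linked", "referring_domains": 2, "redirect_to": "/blog/guide"})

batches = plan_deletions(pages)
print([len(batch) for batch in batches])  # [50, 50, 21]
```

Raising an error when a backlinked URL has no redirect target is a deliberate choice: it stops a batch from going out with a 301 that points nowhere.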

Conclusion: A Leaner Site is a Stronger Site

The metaphor in the name is exact. When you prune a tree, you do not make it smaller for the sake of smallness. You remove the branches that are drawing nutrients from the root system without producing fruit or foliage — so that the branches that remain can grow stronger, reach further, and produce more than they could on a neglected tree with deadwood throughout.

A content audit is the process of finding your deadwood. Our Content Scoring Matrix gives you an objective, consistent, data-driven way to identify it without relying on instinct or impression. The four decision types — Keep, Update, Consolidate, Delete — give you a precise execution protocol for each page. The monitoring framework gives you the data to verify that the pruning is working and the early warning triggers to course-correct if it is not.

The business case is well documented: a 23% increase in organic traffic from pruning alone. A 32% traffic boost without publishing a single new article. Rankings improvements on strong pages within 60 to 90 days of removing the weak ones. These results are not exceptional — they are the expected outcome of a correctly executed content pruning project, because they reflect exactly how Google's quality systems respond to a cleaner, higher-quality content inventory.

The sites that compound their organic authority over time are not the ones that publish the most content. They are the ones that publish selectively, maintain ruthlessly, and prune systematically. Build that discipline into your editorial calendar — annual for established blogs, semi-annual for high-volume publishers — and content pruning becomes not a remediation exercise but a competitive habit.

📋 Article Summary: The Content Pruning Framework

- Content bloat is an active liability: thin, outdated, and duplicated pages dilute crawl budget, suppress site-wide quality signals, and create keyword cannibalization — all of which hurt your strongest pages

- The audit starts with a complete URL inventory: merge GSC, GA4, Screaming Frog, and Ahrefs data into one master spreadsheet before making any decisions

- The Semola Content Scoring Matrix scores each URL across 8 metrics (32 points maximum): 27–32 = Keep / 21–26 = Update / 14–20 = Consolidate / 8–13 = Delete

- The backlink override rule is non-negotiable: any URL with 5+ referring domains requires individual review and a 301 redirect if deleted — never a 410

- Execute in sequence: Delete first (immediate crawl budget benefit, zero authority risk) → Consolidate second → Update third. Monitor between each phase.

- Use 410 Gone for zero-backlink deletions and 301 redirects for any URL with referring domains. Google tends to drop 410 pages from the index somewhat faster than 404s.

- Content pruning directly improves GEO eligibility: a leaner, fresher, better-structured content inventory is more likely to be cited by Google AI Overviews, Perplexity, and ChatGPT

- Post-pruning monitoring: expect 5–15% volatility in weeks 1–2 (normal), recovery to baseline by Day 60, measurable improvement above baseline by Day 90

- Audit cadence: every 6 months for high-volume blogs, annually for lower-volume sites. The first audit is the hardest — subsequent audits are faster on a cleaner inventory.

Frequently Asked Questions


Will deleting pages hurt my domain authority?

How do I decide between consolidating two pages and updating one of them individually?

We have a page from 2019 with 35 referring domains but almost no traffic. Should we delete it?

How long should I wait before running my next content audit?

A page on our site ranks well for a keyword we no longer want to target. Should we delete it?

Founder, Technical Analyst

Oladoyin Falana is a certified digital growth strategist and full-stack web professional with over five years of hands-on experience at the intersection of SEO, web design, and development. His journey into the digital world began as a content writer, a foundation that gave him a deep, instinctive understanding of how keywords, content, and intent drive organic visibility. While honing his craft in content, he taught himself the building blocks of the modern web (HTML, CSS, and React.js), a pursuit that eventually grew into full-stack web development and technical SEO analysis.
